CME Test Questions: How to Write Assessment Items That Measure Real Outcomes

Article Overview: Test questions are central to measuring educational outcomes in a meaningful way. Good test questions ask about only one objective and are aligned with the educational activity. Test questions fall short when they are out of alignment with the activity, use vague stems or weak distractors, or don’t reflect the outcome they claim to measure.

If you're learning how to be a CME writer, assessment design is one of the skills that signals you understand the full scope of the work, not just the content development piece. Treating test questions like an afterthought is common. They’re often tacked onto content development and may be the last thing you write before sending a piece of content off to your client. However, as Angelique Vinther says in WriteCME Roadmap, writing and validating test questions is a skill unto itself, and most CME professionals are only scratching the surface.

Pre- and post-test questions are one of the most visible indicators of whether an education activity works. Whether you work from a framework like Moore’s outcomes levels or Bloom’s Taxonomy, every test question you write should attempt to answer one question: what has changed as a result of the education activity or intervention? If a test question isn’t measuring that, it's not measuring anything that matters.

In this blog, we’ll explore what makes CME assessment items effective, where most writers go wrong, and how to build the skill of writing test questions that measure meaningful outcomes that can affect future education.


Good CME test questions start with alignment

Every good question starts with a close look at the activity itself.

Backward Design: Know what the activity is trying to change

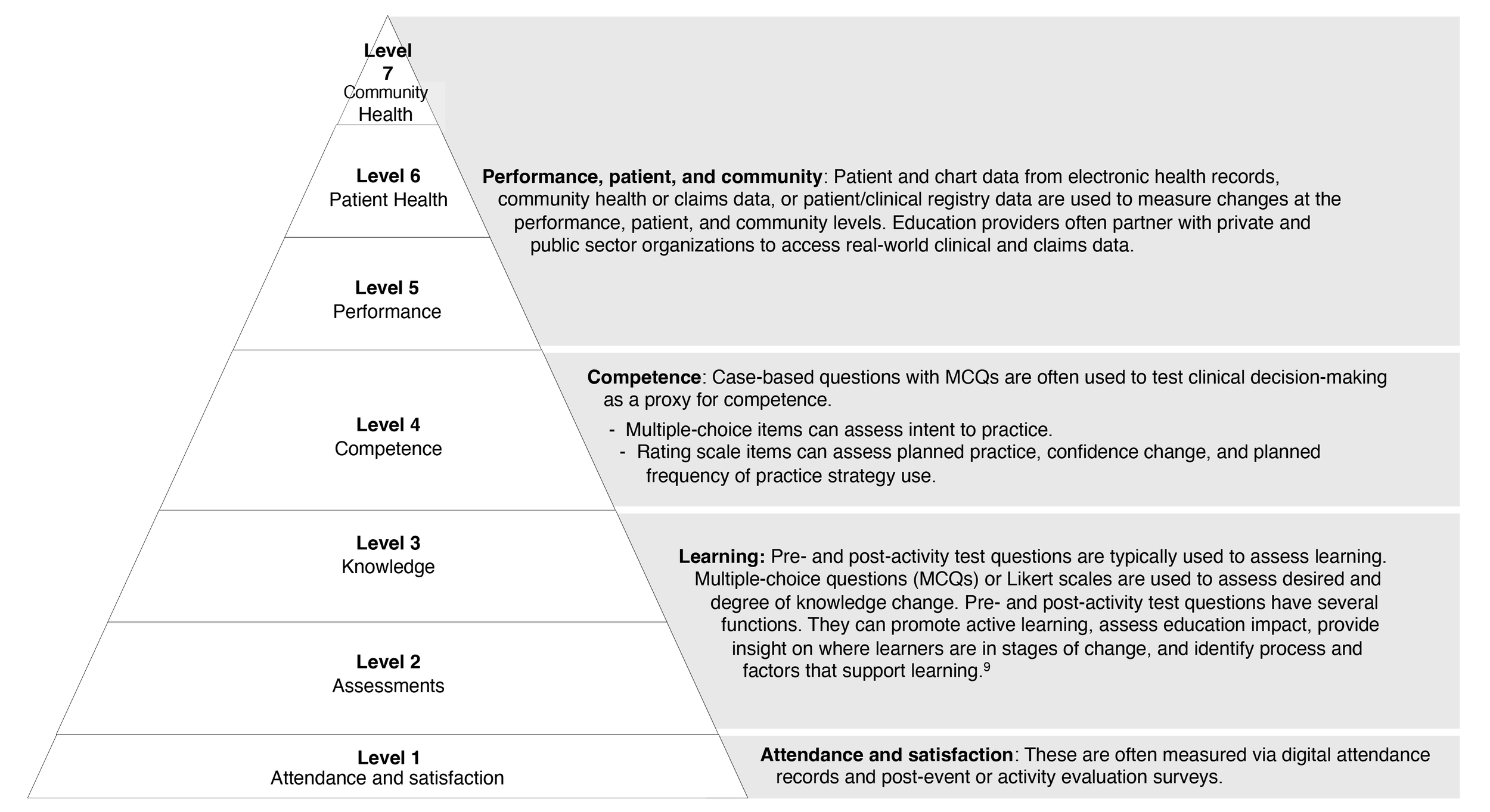

When we craft assessment questions, we’re “designing with the end in mind.” Moore's outcomes levels provide a way to make this alignment concrete rather than abstract. Look at the desired outcome first, then design the assessment question that would demonstrate that outcome, drawing on the content itself.

Pro tip: Write questions while developing content rather than waiting until the end. This reinforces backward design and avoids the most common workflow mistake: leaving the questions until last. It also creates greater cohesion if you design the education with checkpoint questions built into the activity to keep learners engaged.

Source: Moore’s outcomes levels. Bhaval Shah in Howson, A. WriteCME Roadmap: How to Thrive in CME with No Experience, No Network, and No Clue

Match the assessment to the activity format

Moore’s framework provides distinct outcome levels — from participation and satisfaction through knowledge, competence, performance, and patient health. The test question should match the outcomes level the activity is designed to achieve.

For example, if the goal is to introduce learners to a new concept, questions that test knowledge retention are appropriate. But if the activity is designed to address a gap in competence or performance, a clinical vignette that asks learners to choose an appropriate intervention will usually serve you better.

“We care about how people change, and we care about how they learn. While behavior change is the holy grail in CME, it’s not always attainable in a 45 or 60 minute activity. So we have to ask, what is attainable? What can we realistically measure?”

4 common reasons CME test questions fall flat

CME test questions may feel like an afterthought, but the data they contribute to outcomes reporting is invaluable in moving education forward. The field has grown more sophisticated in gathering, analyzing, and reporting outcomes data, but the types and formats of data generated and reported still vary widely. Whatever the format, your outcomes data is only as good as the questions you write. Here are four things to avoid so yours don’t miss the mark.

They test trivia instead of judgment

This is the most common failure of assessment questions. Sometimes, questions that test recall do align with the desired outcome. But a question that asks learners to recall a specific statistic or clinical trial name doesn't tell you whether they can apply the underlying concept. If a learner could answer the question with a quick search, without having completed the activity, the item isn't measuring learning from the activity. At best it's measuring recall, and recall alone doesn't demonstrate competence.

As Wendy Cerenzia and Emily Belcher note on the Write Medicine podcast, “When test questions only measure recall, the outcomes data they generate feeds directly into what outcomes researchers have called the field's central challenge — reports filled with data but no context. Weak items don't just produce weak individual data points. They contribute to a systemic problem in how the field demonstrates value.”

The stem is vague, overloaded, or trying to do too much

A stem that includes unnecessary clinical details, multiple decision points, or ambiguous phrasing makes the item harder for the wrong reasons. The challenge should come from the clinical reasoning required, not from parsing a confusing sentence. The Vanderbilt guide emphasizes succinct, positively worded stems that present a single clear problem. A question that measures two things measures neither of them well — which maps directly to Angelique Vinther’s strategy that each question should measure only one task.

The distractors are weak or unintentionally give away the answer

If three of four options are obviously wrong — implausible, grammatically inconsistent with the stem, or absurdly different in length — the learner doesn't need to reason through the question. They just eliminate. Strong distractors represent common misconceptions, outdated practices, or reasonable-but-not-best approaches. They should feel plausible to someone who hasn't fully integrated the learning.

The item does not actually reflect the claimed outcome

This is a specific symptom of misalignment between the activity and its assessment questions. If the activity claims to improve clinical decision-making but the post-test questions only assess factual recall, the outcomes data will show "improvement" that doesn't actually demonstrate practice change. Data like that can't meaningfully inform future education.

Learn how to craft questions that meaningfully measure outcomes during our four week Test Question Practice Lab with Angelique Vinther.

Join us starting May 7th.

What strong CME assessment items actually do

Now that we know what weak questions look like, what makes questions strong?

They assess application, not just recognition

Strong questions assess a learner’s ability to apply what they’ve learned in a clinical context. Questions that assess recall and understanding can be appropriate, but when an activity is tied to a competence or performance outcome, the assessment needs to test whether the learner can integrate that knowledge into daily practice.

They are tied to one clear objective

Strong CME content addresses clear practice gaps. Likewise, a good assessment question will be tied to one learning objective or task in order to measure that task well. The key is to ask the learner to apply one core piece of knowledge within the context of the question.

They ask the learner to think in context

Engagement/polling/reflective questions serve a formative function during the activity itself — maintaining learner attention, guiding remedial content, showing what other learners chose, and providing rationales. These aren't post-test items, but understanding them helps writers see that different question types serve different educational purposes at different moments in the learning arc.

Knowledge-based questions measure recall and basic understanding. For example: "Which of the following is a common symptom of COVID-19?" These are the standard MCQs most writers default to. But if the activity claims to improve clinical decision-making, a knowledge-based question won't measure that outcome. This question tells you whether the learner remembers a fact. It doesn't tell you whether they can apply this knowledge.

Application/competence questions assess whether learners can apply what they learned in a clinical context. These require a scenario or vignette that puts the learner in a decision-making position. For example: "A 45-year-old patient presents with fever, cough, and difficulty breathing. Based on current guidelines, what is the most appropriate next step in management?" This is where case-based CME questions earn their value. The vignette creates the conditions for clinical reasoning, not just recognition.

Guidance from the National Board of Medical Examiners on writing case-based items provides one of the most rigorous frameworks for this kind of assessment design. It's designed for academic exam writers, but CME writers who understand it have a significant advantage.
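If you track your items in a spreadsheet or script, one way to make alignment checkable is to tag each item with its single objective and the outcome level it measures, then flag anything that sits below the level the activity claims. The sketch below is only an illustration: the `Item` fields, the `LEVELS` ordering, and the `below_claimed_level` helper are hypothetical names, and the two stems are the example questions from this section.

```python
from dataclasses import dataclass

# Assumed ordering of the outcome levels named in this article (lowest to highest).
LEVELS = ["participation", "satisfaction", "knowledge",
          "competence", "performance", "patient health"]

@dataclass
class Item:
    stem: str
    objective: str  # the one learning objective this item targets
    level: str      # the outcome level the item actually measures

def below_claimed_level(items, activity_level):
    """Return items that measure a lower level than the activity claims."""
    target = LEVELS.index(activity_level)
    return [item for item in items if LEVELS.index(item.level) < target]

items = [
    Item("Which of the following is a common symptom of COVID-19?",
         "Recognize presenting symptoms", "knowledge"),
    Item("A 45-year-old patient presents with fever, cough, and difficulty "
         "breathing. Based on current guidelines, what is the most "
         "appropriate next step in management?",
         "Select guideline-based management", "competence"),
]

# Hypothetical activity that claims a competence-level outcome.
flagged = below_claimed_level(items, activity_level="competence")
for item in flagged:
    print(f"Review ({item.level}): {item.stem}")
```

A flag here is a prompt for review, not a verdict. A few recall items can still belong in a competence-level activity, as long as they aren't the only evidence behind the claimed outcome.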

Beyond MCQs: Self-Efficacy and Commitment-to-Change

Most writers hear "test questions" and think exclusively about multiple-choice items. But CME assessment has a broader toolkit. Self-efficacy, intent to change, and commitment to change are all used as indicators of behavior change resulting from CME activities. In fact, outcomes specialist Katie Lucero, PhD, Medscape’s Chief Impact Officer, has noted that self-efficacy can be a better predictor of behavior change than knowledge or competence scores.

These items typically use a Likert scale to gauge a learner's confidence in performing specific clinical tasks before and after an activity. For example: "How confident are you in your ability to treat a patient who has adverse outcomes from COVID-19?" — rated on a scale from not confident to very confident.
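To see how pre- and post-activity confidence ratings become an outcomes number, here is a minimal sketch. It assumes a five-point scale (only the two endpoint labels come from the example above; the middle labels are invented), responses that are paired by learner, and made-up illustrative answers. The `confidence_shift` helper is a hypothetical name.

```python
from statistics import mean

# Assumed 5-point scale; only the endpoints appear in the example item.
SCALE = {
    "not confident": 1,
    "slightly confident": 2,
    "somewhat confident": 3,
    "confident": 4,
    "very confident": 5,
}

def confidence_shift(pre, post):
    """Mean change in rating, with pre[i] and post[i] from the same learner."""
    if len(pre) != len(post):
        raise ValueError("pre and post responses must be paired")
    return mean(SCALE[after] - SCALE[before] for before, after in zip(pre, post))

# Illustrative responses only, not real outcomes data.
pre = ["not confident", "somewhat confident", "slightly confident"]
post = ["somewhat confident", "confident", "confident"]

shift = confidence_shift(pre, post)
print(f"Mean confidence change: {shift:+.2f} points")
```

The mean shift is only one summary. Depending on what your client reports, the share of learners who moved up at least one point may be more useful.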

Write Better Test Questions with the Next WriteCME Pro Practice Lab

If you realized your test questions have been doing more box-checking than outcome-measuring, you have a practice gap. Knowledge alone doesn't change how you write assessment items. Structured practice with expert feedback does.

WriteCME Pro’s upcoming Test Question Practice Lab with expert Angelique Vinther gives you that: a focused environment to draft, refine, and get direct feedback on CME assessment items — so the next time you submit a post-test, it demonstrates educational design competence, not just content coverage.